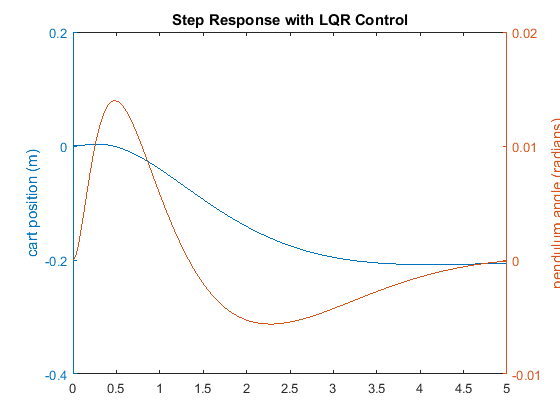

The system is modeled using Simscape Multibody. There are two bodies in the model: one represents the rotational arm and the other represents the pendulum. The bodies are connected by revolute joints that constrain their motion relative to each other. An input voltage is delivered to a DC motor that provides the torque to the rotational arm, and the motor is modeled as a torque gain Kt. The arm of the pendulum has mass Ma, inertia Jb, and length r. For this example, damping in the revolute joints is modeled using gains Kda and Kdp. The outputs of the system are the angles of the arm and the pendulum.

For this example we will run two estimations, using a different parameter set for each one. This allows us to customize each estimation and can result in a more efficient solution.

Estimation Data

Double-click the orange block in the upper-left corner of the inverted pendulum model to launch the Parameter Estimator, preloaded with data for this project. It is configured with the measured experiment data Estimation. It is also configured with the validation data Validation, which we will use later, after estimation. For other uses, you can import experimental data sets from various sources, including MATLAB® variables, MAT files, Excel® files, or comma-separated-value files. The measured data in Estimation is shown in the experiment plot. Only one data set is used for estimation in this example.

Reinforcement learning (RL) is a subset of machine learning that uses dynamic data, not static data as in unsupervised or supervised learning. Reinforcement learning is used in many different applications, from training computer programs to perform certain tasks to autonomous vehicles. Given its popularity, I thought I would use the Reinforcement Learning Toolbox™ by MathWorks to balance the pendulum of a Quanser QUBE-Servo 2.

The main components of reinforcement learning for controlling a dynamic system such as a water tank, DC motor, or active suspension system are illustrated in Figure 1.

Figure 1: Reinforcement learning components in control system applications.

Here is a brief description of the components involved:

- Environment is everything that exists outside the agent. This includes the plant but also any other effects such as measurement noise, disturbances, filtering, and so on.
- Policy uses the observations and reward signal from the environment and, based on these, applies an action to the environment. Different policies are akin to different types of control methods, e.g., PI, PD, PID.
- Reinforcement learning algorithm updates the policy based on the observations and reward signals, e.g., as the environment changes.
- Reward is a function used by RL to know when the system is approaching its objective or task, e.g., balancing the pendulum. It is similar to a cost function used in Linear Quadratic Regulator (LQR) based control, except that RL tries to maximize the reward rather than minimize it.
- Observations are the set of measured signals (e.g., position and velocity of a DC motor) that are used by the RL algorithm, reward function, and policy.
- Actions are the set of control signals (e.g., motor voltage), determined by the policy, that are sent to the plant.

The steps to design a reinforcement learning agent can be summarized as follows: The workflow will be applied to the QUBE-Servo 2 Inverted Pendulum application in the next section.

QUBE-Servo 2 Inverted Pendulum Reinforcement Learning Design

The QUBE-Servo 2 Inverted Pendulum system, shown below, has a DC motor at the base of the rotary arm and two encoders that measure the angular positions of the rotary arm (i.e., the DC motor angle) and the pendulum link.

The Simulink® model used to train the RL agent for this system is shown below.

Figure 3: Simulink model used to train the agent for the QUBE-Servo 2 Inverted Pendulum system

The main RL components shown in Figure 1, such as the reward, observations, and environment, are defined in the Simulink model above. The environment used to train the agent is a nonlinear dynamic model of the QUBE-Servo 2 system, defined in the Simulink QUBE-Servo 2 Pendulum Model block. Nonlinear models represent the hardware dynamics more accurately and over a wider operating range. A model-based RL training method is used for this application, but there are also model-free methods that use the actual hardware in the training process.

The qr reward subsystem contains the reward and stop signals. The stop signals end the current training episode if the system does not satisfy certain conditions. The training episode is stopped when any of these conditions holds:

- The inverted pendulum angle goes beyond ±10°, i.e., |α| > π/18.
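The training-stop condition, |α| > π/18, and the comparison between the RL reward and an LQR cost can be made concrete in a few lines. The Python sketch below is only an illustration of what a block like the qr reward subsystem might compute at each step. It is not the Simulink implementation. It assumes that α is the pendulum angle in radians with α = 0 meaning upright, that θ is the rotary arm angle in radians, and that u is the motor voltage. The quadratic reward, its weights (`q_theta`, `q_alpha`, `r_u`), and the function names are hypothetical, chosen to show the "negated quadratic cost that the agent maximizes" idea.

```python
import math

# Stop threshold from the text: 10 degrees expressed in radians (pi/18).
ALPHA_LIMIT = math.pi / 18


def is_done(alpha: float) -> bool:
    """Stop signal: True when the pendulum leaves the +/-10 degree band.

    Assumes alpha is in radians and alpha == 0 means upright.
    """
    return abs(alpha) > ALPHA_LIMIT


def reward(theta: float, alpha: float, u: float,
           q_theta: float = 1.0, q_alpha: float = 10.0, r_u: float = 0.1) -> float:
    """Hypothetical LQR-style reward: the negated quadratic cost.

    theta: rotary arm angle (rad), alpha: pendulum angle (rad), u: motor voltage (V).
    The weights are illustrative assumptions, not values from the article.
    Maximizing this reward is equivalent to minimizing the quadratic cost.
    """
    cost = q_theta * theta ** 2 + q_alpha * alpha ** 2 + r_u * u ** 2
    return -cost


def qr_reward_step(theta: float, alpha: float, u: float) -> tuple[float, bool]:
    """Hypothetical stand-in for a reward block: returns (reward, stop)."""
    return reward(theta, alpha, u), is_done(alpha)


if __name__ == "__main__":
    # Pendulum 5 degrees off upright: still inside the band, so the episode continues.
    print(qr_reward_step(0.0, math.radians(5), 0.0))
    # Pendulum 12 degrees off upright: the stop signal fires.
    print(qr_reward_step(0.0, math.radians(12), 0.0))
```

The reward and the stop signal are kept as separate functions. This follows the split between reward and stop signals in the text. It also lets you try different reward shapes without touching the termination rule.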